You Changed, VMware. You Used To Be Cool.

August 10, 2011

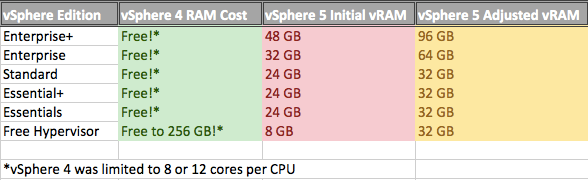

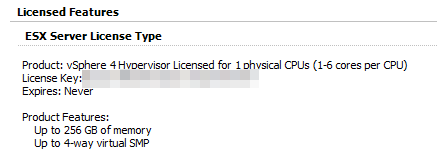

So by now we’ve all heard about VMware’s licensing debacle. The two biggest changes were that VMware would be charging for RAM utilization (vRAM) as well as per CPU, and the free version of ESXi would go from a 256 GB limit to an 8 GB limit.

There was a bit of pushback from customers. A lot, actually. A new term has entered the data center lexicon: vTax, a take on the new vRAM term. VMware sales reps were inundated with angry customers. VMware’s own message boards were swarmed with angry posts. Dogs and cats living together, mass hysteria. And I think my stance on the issue has been, well, unambiguous.

It’s tough to imagine that VMware anticipated that bad of a response. I’m sure they thought there would be some grumblings, but we haven’t seen a product launch go this badly since New Coke.

VMware is in good company

VMware introduced the concept of vRAM, essentially making customers pay for something that had been free. The only bone they threw at customers was the removal of the core limit on processors (previously 6 or 12 cores per CPU depending on the license). However, it’s not more cores most customers need, it’s more RAM. Physical RAM is usually the limiting factor for increasing VM density on a single host, not lack of cores. Higher VM density means lower power, lower cooling, and fewer hosts.

VMware knew this, so they hit their customers where it counts. The only way to describe this, no matter how VMware tries to spin it, is a price increase.

VMware argued that the vast majority of customers would be unaffected by the new licensing scheme, that they would pay the same with vSphere 5 as they did with vSphere 4. While that might have been true, IT doesn’t think about right now. They think about the next hardware they’re going to order, and that next hardware is dripping with RAM. Blade systems from Cisco and HP have the ability to cram 512 GB of RAM in them. Monster stand-alone servers can take even more: Oracle’s Sun Fire X4800 can hold up to 2 TB of RAM. And it’s not like servers are going to get less RAM over time. Customers saw their VMware licensing costs increasing with Moore’s Law. We’re supposed to pay less with Moore’s Law, and VMware figured out a way to make us pay more.

So people were mad. VMware decided to backtrack a bit, and announced adjusted vRAM allotments and rules. So what’s changed?

You still pay for vRAM allotments, but the allotments have been increased across the board. Even the free version of the hypervisor got upped to 32 GB from 8 GB.

Also, vRAM usage would be based on a yearly average, so spikes in vRAM utilization wouldn’t increase costs so long as they were relatively short lived. vRAM utilization is also now capped at 96 GB per VM, so no matter how large a single VM is, it will only count as 96 GB of vRAM used.
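To make that arithmetic concrete, here’s a rough Python sketch of how I read the two adjusted rules. The function names, the sample VM sizes, and the idea of equally spaced usage samples are all my own assumptions for illustration. This is not how VMware actually meters anything.

```python
VM_CAP_GB = 96  # per-VM cap described in the adjusted rules

def counted_vram_gb(vm_sizes_gb, cap_gb=VM_CAP_GB):
    """Sum vRAM across VMs, counting each VM at no more than cap_gb."""
    return sum(min(size, cap_gb) for size in vm_sizes_gb)

def average_vram_gb(samples_gb):
    """Plain average of usage samples (assumed equally spaced over the year)."""
    return sum(samples_gb) / len(samples_gb)

# Hypothetical pool: three ordinary VMs plus one 256 GB monster.
vms = [8, 16, 32, 256]
steady = counted_vram_gb(vms)  # the 256 GB VM only counts as 96 GB

# Hypothetical year: eleven quiet months and one month that spikes to 400 GB.
monthly_samples = [steady] * 11 + [400]
yearly_average = average_vram_gb(monthly_samples)

print(f"steady-state vRAM counted: {steady} GB")
print(f"yearly average with one spike: {yearly_average:.1f} GB")
```

The point of the sketch: one short spike barely moves the yearly average, and the monster VM counts for far less than its real size.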

Even with the new vRAM allotments, it’s still a price increase

The adjustments to vRAM allotments have helped quell the revolt, and the discussion is starting to turn from vTax to the new features in vSphere 5. The 32 GB limit for the free version of ESXi also made a lot more sense. 8 GB was almost useless (my own ESXi host has 18 GB of RAM), and given what it costs to power and cool even a basement lab, not even worth powering up. 32 GB means a nice beefy lab system for the next 2 years or thereabouts.

What’s Still Wrong

While the updated vRAM licensing has alleviated the immediate crisis, there is some damage done, some of it permanent.

The updated vRAM allotments make more sense for today, and give some room for growth in the future, but they still have a limited shelf life. As servers get more and more RAM over the next several years, vTax will automatically increase. VMware is still tying their licensing to a depreciating asset in RAM.
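Here’s a back-of-the-napkin Python sketch of why that bothers me. It assumes you need one license per CPU socket, that each license brings a fixed vRAM allotment into a pool, and that you buy extra licenses once the pool runs dry. The 64 GB allotment, the two-socket host, and the assumption that every gigabyte of physical RAM ends up handed out to VMs are hypothetical numbers I picked for illustration, so plug in the real figures for your edition.

```python
import math

def licenses_needed(cpu_sockets, vram_gb, allotment_gb):
    """Licenses required under the assumed model: at least one per socket,
    and enough pooled allotment to cover the vRAM in use."""
    by_cpu = cpu_sockets
    by_vram = math.ceil(vram_gb / allotment_gb)
    return max(by_cpu, by_vram)

ALLOTMENT_GB = 64  # hypothetical per-license allotment, not an official figure
SOCKETS = 2        # hypothetical two-socket host

# Same two sockets, more RAM with each hardware refresh.
for ram_gb in (128, 256, 512, 1024, 2048):
    count = licenses_needed(SOCKETS, ram_gb, ALLOTMENT_GB)
    print(f"{ram_gb:>5} GB host -> {count} licenses")
```

Under those assumptions the socket count never changes, but the license count doubles every time the RAM does.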

That was part of what got people so peeved about the vRAM model. Even if they ran the numbers and it turned out they didn’t need additional licensing from vSphere 4 right now, most organizations had an eye on their hardware refresh cycle, because servers get more and more RAM with each refresh cycle.

VMware is going to have to continue to up vRAM allotments on a regular basis. I find it uncomfortable to know that my licensing costs could increase exponentially as RAM becomes exponentially more plentiful over time. I don’t doubt that they will increase allotments, but we have no idea when (and honestly, even if) they will.

You Used to be Cool, VMware

The relationship between VMware and its customer base has also been damaged. VMware had incredible goodwill from customers as a vendor, a relationship that was the envy of the IT industry. We had their back, and we felt they had our back. No longer. Customers and the VMware community will now look at VMware with a somewhat wary eye. Not as wary with the vRAM adjustments, but wary still.

I have to imagine that Citrix and Microsoft have sent VMware’s executives flowers with messages like “thank you so much” and “I’m going to name my next child after you”. I’m hearing anecdotal evidence that interest in Hyper-V and Citrix Xen has skyrocketed, even with the vRAM adjustments. In the Cisco UCS classes I teach, virtually everyone has taken more than just a casual look at Citrix and Hyper-V.

Ironically, Citrix and Microsoft have struggled to break the stranglehold that VMware had on server virtualization (over 80% market share). They’ve tried several approaches, and haven’t been successful. It’s somewhat amusing that it’s a move that VMware made that seems to be loosening the grip. And remember, part of that grip was the loyal user community.

Think about how significant that is. Before vSphere 5, there was no reason for most organizations to get a “wandering eye”. VMware had the most features and a huge install base, plus plenty of resources and expertise around, which made VMware the obvious choice for most organizations. The pricing was reasonable, so there wasn’t a real need to look elsewhere.

Certainly the landscape for both VMware and the other vendors has changed substantially. It will be interesting to see how it all plays out. My impression is that VMware will still make money, but they will lose market share, even if they stay #1 in terms of revenue (since they’re charging more, after all).