Fibre Channel: What Is It Good For?

January 4, 2016 1 Comment

In my last article, I talked about how Fibre Channel, as a technology, has probably peaked. It’s not dead, but I think we’re seeing the beginning of a slow decline. Fibre Channel’s long goodbye is caused by a number of factors (that mostly aren’t related to Fibre Channel itself), including explosive growth in non-block storage, scale-out storage, and interopability issues.

But rather than diss Fibre Channel, in this article I’m going to talk about the advantages of Fibre Channel has over IP/Ethernet storage (and talk about why the often-talked about advantages aren’t really advantages).

Fibre Channel’s benefits have nothing to do with buffer to buffer credits, the larger MTU (2048 bytes), its speed, or even its lossless nature. Instead, Fibre Channel’s (very legitimate) advantages are mostly non-technical in nature.

It’s Optimized Out of the Box

When you build a Fibre Channel-based SAN, there’s no optimization that needs to be done: Fibre Channel comes out of the box optimized for storage (SCSI) traffic. There are settings you can tweak, but most of the time there’s nothing that needs to be done other than set port modes and setup zoning. The same is true for the host HBAs. While there are some knobs you can tweak, for the most part the default settings will get you a highly performant storage network.

It’s possible to build an Ethernet network that performs just as well as a Fibre Channel network. It just typically takes more work. You might need to tune MTU (jumbo frames), tune TCP driver settings,tweak flow control settings, or a several other tweaks. And you need someone that knows what all the little nerd-knobs do on IP/Ethernet networks. In Fibre Channel it’s fire and forget.

It’s an Air-Gapped Network

From host to storage array, Fibre Channel is an air-gapped network in that storage traffic and non-storage traffic would run on completely separate networks. Fibre Channel’s nearly exclusive payload is SCSI, and SCSI as a protocol is far more fragile than other protocols, so running it on a separate network makes sense operationally.

Think about it: If you unplug an Ethernet cable while you’re watching a Youtube video of cats for 5 seconds, and plug it back in, you might see some buffering (and you might not, depending on how much it pre-fetched). If you unplug your hard drive for 5 seconds, well, buffering is going to be the last of your worries.

SCSI is more fragile, so having it on a separate network makes sense.

You’ve Got One Job

Ethernet’s strength is that it is supremely flexible. You can run storage traffic on it, video traffic, voice traffic, animated GIFs of cats, etc. You can run iSCSI, HTTP, SMTP, etc. You can run TCP, UDP, IPv4, IPv6, etc. This does add a bit of complication to the configuration of Ethernet/IP networks, however, in the need for tweaking (QoS, flow control, etc.)

Fibre Channel’s strength is that you’re just doing one type of traffic: SCSI (though there is talk of NVMe over Fibre Channel now). Either way, it’s block storage, and that’s all you’re ever going to run on Fibre Channel. This particular characteristic is one of the reasons that Fibre Channel is optimized out of the box.

Slow To Change

In IT, we’ve usually been pretty terrified of change. Both in terms of the technology that we’re familiar with, and (more specifically) topological or configuration changes. With DevOps/Agile/whateveryouwanttocallit, the later is changing. But not with Fibre Channel. Fibre Channel configurations are fairly static. And for traditional IT operations, that means a very stable setup. This goes along with the air-gapped network, in that we tend to be much more careful with SCSI traffic.

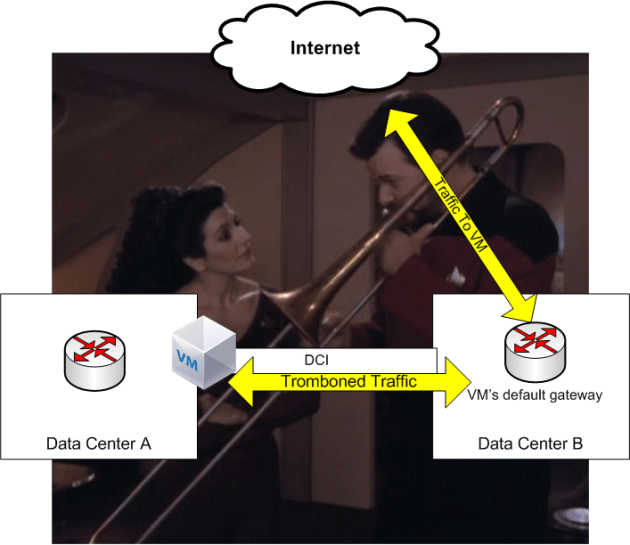

Double Your SAN

Fibre Channel has a rather unique solution to network redundancy: Build two completely separate networks: SAN A and SAN B. Fibre Channel’s job is to provide two independent data paths to from the initiator to the target.

From my article Fibre Channel and Ethernet. Also the greatest SAN diagram ever made.

Most of the redundancy in Fibre Channel is instead provided by the host’s drivers (multi-path driver, or MPIO) and in some cases, the storage array’s controller. Network redundancy, beyond having two separate networks, is not required and often not implemented (though available). While Ethernet/IP networks mesh the hell out of everything, in Fibre Channel it’s strictly forbidden to interconnect the A and B fabrics in any way.

A/B network separation wouldn’t work on a global scale of course, but Fibre Channel wasn’t meant to run a global network: Just a local SAN. As a result, it’s a simple (and effective way) to handle redundancy. Plus, it puts the onus on the host and storage arrays, not us SAN administrators. Our responsibility is simple and clear: Two independent data paths.

Centralized Management

Another advantage is the centralized configuration for zoning and zonesets with Fibre Channel. You create multiple zones, create a zoneset, and voila, that configuration is automatically pushed out to the other switches in the fabric. That saves a lot of time (and configuration errors) by having one connectivity configuration (zone configuration are what allows which initiators to talk to which targets) that is shared among the switches in a given fabric.

In fact, Fibre Channel provides a whole host of fabric services (name, configuration, etc.) that make management of a SAN easy, even if you’re using the CLI. Both Cisco and Brocade have GUI tools if that’s your thing too (I won’t laugh derisively at you, I promise).

In Ethernet/IP networks, each network device is usually a configuration point itself. As a result, we tend not to use IP access lists for iSCSI or NFS security, instead relying on security mechanisms on the hosts and storage arrays. That’s changing with policy-based Ethernet fabrics (such as Cisco ACI) but for the most part, configuring a storage network based on IP/Ethernet is a bit more of a configuration burden.

What Aren’t Fibre Channel’s Strengths

Having said all that, there are a few things that I see people point out to as the strengths of Fibre Channel that aren’t really strengths, in that they don’t provide material benefit over other technologies.

Buffer to buffer credits is one of those features. Buffer to buffer credits allows for a lossless fabric overall by preventing frame drop on a port-by-port basis. But buffer to buffer credits aren’t the only way to provide losslessness. iSCSI provides lossless transport by re-transmitting any loss segments. Converged Ethernet (CE) provides losslessness with PFC (priority flow control) sending PAUSE frames to prevent buffer overruns. Both TCP and CE provide the same effect as buffer to buffer credits: Lossless transport.

So if losslessness is your goal, then there’s more than one way to handle that.

Whether its re-transmitting TCP segments, PAUSE frames, or buffer to buffer credits, congestion is congestion. If you try to push 16 Gigabits through an 8 Gigabit link, something has to give.

The only way a buffer can be overfilled is if there’s congestion. Buffer to buffer credits do not eliminate congestion, they’re just a specific way of dealing with it. Congestion is congestion, and the only solution is more bandwidth.

I’ve got congestion, and the only cure is more bandwidth

Buffer to buffer credits, gigantic buffers, flow control, none of these fix bandwidth issues. If you’re starved of bandwidth, add more bandwidth.

While I think the future of storage will be one without Fibre Channel, for traditional workloads (read VMware vSphere), there is no better storage technology in most cases than Fibre Channel. Its strength is not in its underlying technology or engineering, but in its single-minded purpose and simplicity. Most of Fibre Channel’s benefits aren’t even technological: Instead they’re more of a “Layer 8” benefit. And these are the reasons why Fibre Channel, thus far, has been so successful (and nice to work with).