Peak Fibre Channel

November 23, 2015

There have been several articles talking about the death of Fibre Channel. This isn’t one of them. However, it is an article about “peak Fibre Channel”. I think, as a technology, Fibre Channel is in the process of (if it hasn’t already) peaking.

There’s a lot of technology in IT that doesn’t simply die. Instead, it grows, peaks, then slowly (or perhaps very slowly) fades. Consider Unix/RISC. The Unix/RISC market right now is a caretaker platform. Very few new projects are built on Unix/RISC. Typically a new Unix server is purchased to replace an existing but no-longer-supported Unix server to run an older application that we can’t or won’t move onto a more modern platform. The Unix market has been shrinking for over a decade (2004 was probably the year of Peak Unix), yet the market is still a multi-billion dollar revenue market. It’s just a (slowly) shrinking one.

I think that is what is happening to Fibre Channel, and it may have already started. It will become (or already is) a caretaker platform. It will run the workloads of yesterday (or rather the workloads that were designed yesterday), while the workloads of today and tomorrow have a vastly different set of requirements, and where Fibre Channel doesn’t make as much sense.

Why Fibre Channel Doesn’t Make Sense in the Cloud World

There are a few trends in storage that are working against Fibre Channel:

- Public cloud growth outpaces private cloud

- Private cloud storage endpoints are more ephemeral and storage connectivity is more dynamic

- Block storage is taking a back seat to object (and file) storage

- RAIN versus RAID

- IP storage is as performant as Fibre Channel, and more flexible

Cloudy With A Chance of Obsolescence

The transition to cloud-style operations isn’t great for Fibre Channel. First, we have the public cloud providers: Amazon AWS, Microsoft Azure, Rackspace, Google, etc. They tend not to use much Fibre Channel (if any at all) and rely instead on IP-based storage or other solutions. And whatever Fibre Channel they might consume, it still means far fewer ports purchased (HBAs, switches) as workloads migrate to public cloud than if those workloads had stayed in private data centers.

The Ephemeral Data Center

In enterprise datacenters, most operations are what I would call traditional virtualization. And that is dominated by VMware’s vSphere. However, vSphere isn’t a private cloud. According to NIST, to be a private cloud you need to be self service, multi-tenant, programmable, dynamic, and show usage. That ain’t vSphere.

For VMware’s vSphere, I believe Fibre Channel is the hands down best storage platform. vSphere likes very static block storage, and Fibre Channel is great at providing that. Everything is configured by IT staff. A few things are automated, though Fibre Channel configurations are still done mostly by hand.

Probably the biggest difference between traditional virtualization (i.e. VMware vSphere) and private cloud is the self-service aspect. Developers, DevOpsers, and overall consumers of IT resources configure, spin up, and spin down their own resources. This leads to a very, very dynamic environment.

Endpoints are far more ephemeral, as demonstrated here by Mr Mittens.

Where we used to deal with virtual machines as everlasting constructs (pets), we’re moving to a more ephemeral model (cattle). In Netflix’s infrastructure, the average lifespan of a virtual machine is 36 hours. And compared to virtual machines, containers (such as Docker containers) tend to live for even shorter periods of time. All of this means a very dynamic environment, and that requires self-service portals and automation.

And one thing we’re not used to in the Fibre Channel world is a dynamic environment.

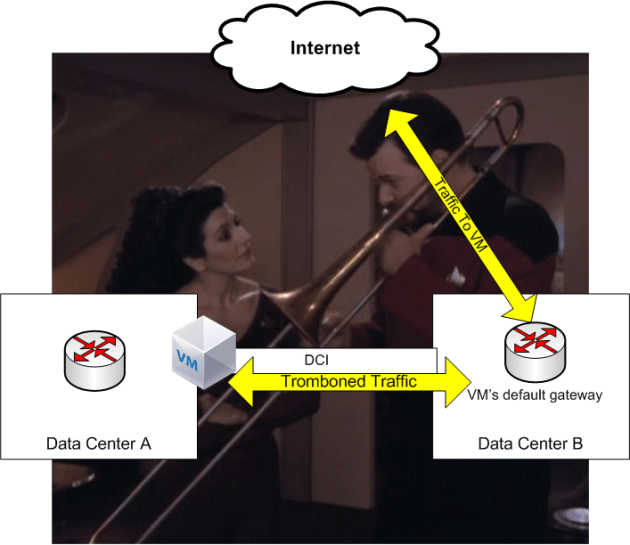

A SAN administrator at the thought of automated zoning and zonesets

Virtual machines will need to attach to block storage on the fly, or they’ll rely on other types of storage, such as container images that are retrieved from an object store and run on a local file system. For these reasons, Fibre Channel is not usually a consideration for Docker, OpenStack (though there is work on Fibre Channel integration), or other very dynamic, ephemeral workloads.

Objectification

Block storage isn’t growing, at least not at the pace that object storage is. Object storage is becoming the de facto way to store the deluge of unstructured data. Object storage consumption is growing at 25% per year according to IDC, while traditional RAID revenues seem to be contracting.

Making it RAIN

In order to handle the immense scale necessary, storage is moving from RAID to RAIN. RAID is of course Redundant Array of Inexpensive Disks, and RAIN is Redundant Array of Inexpensive Nodes. RAID-based storage typically relies on controllers and shelves. This is a scale-up style approach. RAIN is a scale-out approach.

At this scale, RAIN systems such as Hadoop’s HDFS, Ceph, Swift, and ScaleIO handle the exponential increase in storage requirements better than traditional scale-up storage arrays. And primarily these technologies are using IP connectivity/Ethernet for the node-to-node and node-to-client communication, and not Fibre Channel. Fibre Channel is great for many-to-one communication (many initiators to a few storage arrays) but is not great at many-to-many meshing.

Ethernet and Fibre Channel

It’s been widely regarded in many circles that Fibre Channel is a higher performance protocol than, say, iSCSI. That was probably true in the days of 1 Gigabit Ethernet. These days, however, there’s not much of a difference between IP storage and Fibre Channel in terms of latency and IOPS. Provided you don’t saturate the link (neither eliminates congestion issues when you oversaturate a link), they’re about the same, as shown in several tests such as this one from NetApp and VMware.

Fibre Channel is currently at 16 Gigabit per second maximum. Ethernet comes in 10, 40, and 100 Gigabit, though most server connections are currently at 10 Gigabit, with some storage arrays being 40 Gigabit. In 2016 Fibre Channel is coming out with 32 Gigabit Fibre Channel HBAs and switches, and Ethernet is coming out with 25 Gigabit Ethernet interfaces and switches. They both provide nearly identical throughput.

Wait, what?

But isn’t 32 Gigabit Fibre Channel faster than 25 Gigabit Ethernet? Yes, but barely.

- 25 Gigabit Ethernet raw throughput: 3125 MB/s

- 32 Gigabit Fibre Channel raw throughput: 3200 MB/s

Do what now?

32 Gigabit Fibre Channel isn’t really 32 Gigabit Fibre Channel. It actually runs at about 28 Gigabits per second. This is a holdover from the 8b/10b encoding in 1/2/4/8 Gigabit FC, where every Gigabit of speed brought 100 MB/s of throughput (instead of 125 MB/s like in 1 Gigabit Ethernet). When FC switched to 64b/66b encoding for 16 Gigabit FC, they kept the 100 MB/s per gigabit, and as such lowered the line rate. This concept is outlined here in this screencast I did a while back. In short, 16 Gigabit Fibre Channel is really 14 Gigabit Fibre Channel, and 32 Gigabit Fibre Channel is really 28 Gigabit Fibre Channel.

As a result, 32 Gigabit Fibre Channel is only about 2% faster than 25 Gigabit Ethernet. 128 Gigabit Fibre Channel (12800 MB/s) is only 2% faster than 100 Gigabit Ethernet (12500 MB/s).
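If you want to check the arithmetic yourself, here is a minimal Python sketch. The function names are made up for illustration, and it only uses the nominal figures from this article: 125 MB/s per Gigabit for Ethernet, and the Fibre Channel convention of 100 MB/s per marketed Gigabit. It ignores protocol overhead on both sides.

```python
def ethernet_mb_per_s(gigabits: float) -> float:
    """Raw Ethernet throughput: 1 Gbit/s = 1000 Mbit/s = 125 MB/s."""
    return gigabits * 1000 / 8


def fibre_channel_mb_per_s(marketed_gigabits: float) -> float:
    """Fibre Channel convention: each marketed 'Gigabit' maps to 100 MB/s."""
    return marketed_gigabits * 100.0


def fc_advantage_percent(fc_marketed_gigabits: float, eth_gigabits: float) -> float:
    """How much faster (in percent) the FC link is than the Ethernet link."""
    fc = fibre_channel_mb_per_s(fc_marketed_gigabits)
    eth = ethernet_mb_per_s(eth_gigabits)
    return (fc / eth - 1) * 100


if __name__ == "__main__":
    # Compare the pairs discussed above: 32G FC vs 25GbE, 128G FC vs 100GbE.
    for fc, eth in [(32, 25), (128, 100)]:
        print(
            f"{fc}G FC: {fibre_channel_mb_per_s(fc):.0f} MB/s, "
            f"{eth}GbE: {ethernet_mb_per_s(eth):.0f} MB/s, "
            f"FC advantage: {fc_advantage_percent(fc, eth):.1f}%"
        )
```

Both pairs come out at a 2.4% advantage for Fibre Channel, which is where the "about 2%" figure comes from.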

Ethernet/IP Is More Flexible

In the world of bare metal server to storage array, and virtualization hosts to storage array, Fibre Channel had a lot of advantages over Ethernet/IP. These advantages included a fairly easy to learn distributed access control system, a purpose-built network designed exclusively to carry storage traffic, and a separately operated fabric. But those advantages are turning into disadvantages in a more dynamic and scaled-out environment.

In terms of scaling, Fibre Channel has limits on how big a fabric can get. Typically it’s around 50 switches and a couple thousand endpoints. The theoretical maximums are higher (based on the 24-bit FC_ID address space) but both Brocade and Cisco have practical limits that are much lower. For the current (or past) generations of workloads, this wasn’t a big deal. Typically endpoints numbered in the dozens or possibly hundreds for the large scale deployments. With a large OpenStack deployment, it’s not unusual to have tens of thousands of virtual machines, and if those virtual machines need access to block storage, Fibre Channel probably isn’t the best choice. It’s going to be iSCSI or NFS. Plus, you can run it all on a good Ethernet fabric, so why spend money on extra Fibre Channel switches when you can run it all on IP? And IP/Ethernet fabrics scale far beyond Fibre Channel fabrics.
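To put the "theoretical maximum" in perspective, here is a small Python sketch of the 24-bit FC_ID. It assumes the usual layout of three 8-bit fields (Domain, Area, Port) and the commonly cited range of 239 switch domain IDs (0x01 through 0xEF). The helper name is hypothetical, and none of this reflects any vendor’s supported limits, which are far lower.

```python
FC_ID_BITS = 24

# Raw size of the address space: 2**24 = 16,777,216 possible FC_IDs.
TOTAL_FC_IDS = 2 ** FC_ID_BITS

# Assumption: domain IDs 0x01..0xEF are the ones available to switches.
USABLE_DOMAIN_IDS = 0xEF - 0x01 + 1


def split_fc_id(fc_id: int) -> tuple[int, int, int]:
    """Split a 24-bit FC_ID into (domain, area, port), 8 bits each."""
    if not 0 <= fc_id < TOTAL_FC_IDS:
        raise ValueError("FC_ID must fit in 24 bits")
    domain = (fc_id >> 16) & 0xFF
    area = (fc_id >> 8) & 0xFF
    port = fc_id & 0xFF
    return domain, area, port


if __name__ == "__main__":
    print(f"Total FC_IDs: {TOTAL_FC_IDS:,}")
    print(f"Usable switch domains (assumed): {USABLE_DOMAIN_IDS}")
    print(f"FC_ID 0x010200 -> domain/area/port = {split_fc_id(0x010200)}")
```

Millions of addresses on paper, then, versus a couple thousand endpoints in practice.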

Another issue is that Fibre Channel doesn’t play well with others. There are only two vendors that make Fibre Channel switches today, Cisco and Brocade (if you have a Fibre Channel switch that says another vendor made it, such as IBM, it’s actually a re-badged Brocade). There are ways around it in some cases (NPIV), though you still can’t mesh two vendor fabrics reliably.

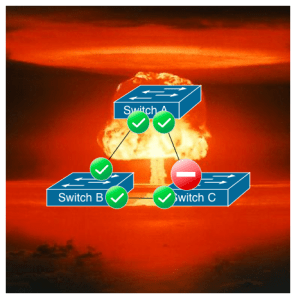

Pictured: Fibre Channel Interoperability Mode

And personally, one of my biggest pet peeves regarding Fibre Channel is the lack of ability to create a LAG to a host. There’s no way to bond several links together to a host. It’s all individual links, which requires special configurations to make a storage array with many interfaces utilize them all (essentially you zone certain hosts to certain array ports).

None of these are issues with Ethernet. Ethernet vendors (for the most part) play well with others. You can build an Ethernet Layer 2 or Layer 3 fabric with multiple vendors, there are plenty of vendors that make a variety of Ethernet switches, and you can easily create a LAG/MCLAG to a host.

My name is MCLAG and my flows be distributed by a deterministic hash of a header value or combination of header values.
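That "deterministic hash of a header value" idea can be sketched in a few lines of Python. This is an illustration only: real switches use their own (often configurable) hash functions and header fields, and the function name, the choice of CRC32, and the link names here are all assumptions.

```python
import zlib


def pick_member_link(src_ip: str, dst_ip: str, src_port: int, dst_port: int,
                     links: list[str]) -> str:
    """Pick a LAG member link for a flow by hashing its header values.

    The same flow always hashes to the same link (so packets stay in order),
    while different flows tend to spread across the members.
    """
    if not links:
        raise ValueError("LAG needs at least one member link")
    key = f"{src_ip}|{dst_ip}|{src_port}|{dst_port}".encode()
    # CRC32 stands in for whatever hash a given switch actually uses.
    return links[zlib.crc32(key) % len(links)]


if __name__ == "__main__":
    members = ["eth1", "eth2", "eth3", "eth4"]  # hypothetical member links
    # A handful of iSCSI-style flows (TCP 3260) from different source ports.
    for sport in range(49152, 49160):
        link = pick_member_link("10.0.0.10", "10.0.0.50", sport, 3260, members)
        print(f"source port {sport} -> {link}")
```

Note that a single flow never uses more than one link, so a LAG helps when there are many flows, not when there is one big one.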

What About FCoE?

FCoE will share the fate of Fibre Channel. It has the same scaling, multi-node communication, multi-vendor interoperability, and dynamism problems as native Fibre Channel. Multi-hop FCoE never really caught on, as it didn’t end up being less expensive than Fibre Channel, and it tended to complicate operations, not simplify them. Single-hop/End-host FCoE, like the type used in Cisco’s popular UCS server system, will continue to be used in environments where blades need Fibre Channel connectivity. But again, I think that need has peaked, or will peak shortly.

Fibre Channel isn’t going anywhere anytime soon, just like Unix servers can still be found in many datacenters. But I think we’ve just about hit the peak. The workload requirements have shifted. It’s my belief that for the current/older generation of workloads (bare metal, traditional/pet virtualization), Fibre Channel is the best platform. But as we transition to the next generation of platforms and applications, the needs have changed and they don’t align very well with Fibre Channel’s strengths.

It’s an IP world now. We’re just forwarding packets in it.