Death To vMotion

December 1, 2013

Very few technologies in the data center have had as significant an impact as VMware’s vMotion. It allowed us to decouple operating system and server operations. We could maintain, update, and upgrade the underlying compute layer without disturbing the VMs running on it. We could write web applications in the same model we were used to, back when we wrote them for specific physical servers. From an application developer perspective, nothing needed to change. From a system administrator perspective, it made (virtual) server administration easier and more flexible. vMotion helped us move almost seamlessly from the physical world to the virtualized world with nary a hiccup. Combined with HA and DRS, it’s made VMware billions of dollars.

And it’s time for it to go.

From a networking perspective, vMotion has wreaked havoc on our data center designs. Starting in the mid-2000s, we all of a sudden needed to build huge Layer 2 networking domains instead of beautiful, simple Layer 3 fabrics. East-West traffic went insane. With multi-layer switches (Ethernet switches that could route as fast as they could switch), we had just gotten to the point where we could build really fast Layer 3 fabrics and get rid of spanning tree. vMotion required us to undo all that and go back to Layer 2 everywhere.

But that’s not why it needs to go.

Redundant data centers and geographic diversification are another area where vMotion is being applied. Having the ability to shift a workload from one data center to another is one of the holy grails of data centers, but to accomplish this we need Layer 2 data center interconnects (DCI), with technologies like OTV, VPLS, EoVPLS, and others. There’s also a distance limitation, as the round-trip latency between the two data centers needs to be 10 milliseconds or less. And since fiber propagation delay, plus the latency added by every switch, router, and DCI device along the path, all count against that 10 ms, there is a fairly limited distance over which you can effectively vMotion (200 kilometers, or a bit over 120 miles). That is, unless you have a Stargate.
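Propagation delay is only one slice of that 10 ms budget. Here’s a rough Python estimate of how much of it the fiber itself eats. It assumes light in fiber covers about 200 km per millisecond (roughly two-thirds of its speed in a vacuum) and ignores equipment latency; `propagation_rtt_ms`, `remaining_budget_ms`, and `path_stretch` are illustrative names, not part of any VMware tool.

```python
# Rough propagation-delay budget for a stretched vMotion link.
# Assumption: light in single-mode fiber travels at roughly 200,000 km/s,
# i.e. about 200 km per millisecond (5 microseconds per km) one way.
FIBER_KM_PER_MS = 200.0

def propagation_rtt_ms(distance_km: float, path_stretch: float = 1.0) -> float:
    """Round-trip propagation delay over fiber, in milliseconds.

    path_stretch (hypothetical parameter) models fiber routes that run
    longer than the straight-line distance between the two sites.
    """
    one_way_ms = distance_km * path_stretch / FIBER_KM_PER_MS
    return 2 * one_way_ms

def remaining_budget_ms(distance_km: float, budget_ms: float = 10.0,
                        path_stretch: float = 1.0) -> float:
    """Latency left over for switches, routers, DCI gear, and queuing."""
    return budget_ms - propagation_rtt_ms(distance_km, path_stretch)

for km in (200, 1000):
    print(km, "km:", propagation_rtt_ms(km), "ms RTT,",
          remaining_budget_ms(km), "ms left")
```

Under these assumptions a 200 km link spends about 2 ms of the budget on propagation and leaves about 8 ms for everything else, while a 1,000 km link burns the entire 10 ms on the fiber alone, before a single packet is switched.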

You do have a Stargate in your data center, right?

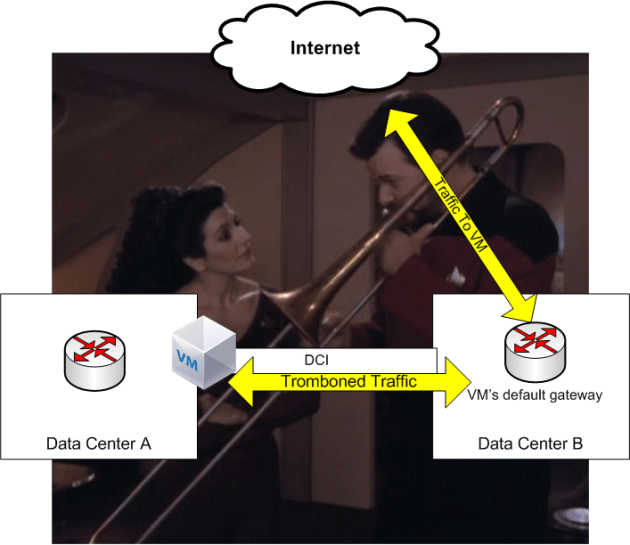

And that’s just getting a VM from one data center to another, which someone once described to me as a parlor trick. Moving a VM from one data center to another serves no purpose by itself. You have to get its storage over as well (VMDK files if you’re lucky, raw LUNs if you’re not) and deal with the traffic tromboning problem between the two data centers.

The IP address is still coupled to the server (identity and location are coupled in normal operations, something LISP is meant to address), so traffic still reaches the server via the original data center and traverses the DCI, and the server then responds through its default gateway, which is most likely still in the original data center. All that work to get a VM to a different data center, wasted.
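A toy Python model makes the tromboning path concrete. It assumes two hypothetical sites, `dc1` (original) and `dc2` (where the VM landed), and that the VM’s subnet is still routed only to `dc1`; it simply counts how many times one request/response pair crosses the DCI. The function names are made up for illustration.

```python
# Toy model of traffic tromboning after a VM moves to a second data center.
# Assumptions (hypothetical): two sites, "dc1" (original) and "dc2" (new),
# joined by a Layer 2 DCI. The VM's subnet is still advertised only from dc1.

def request_path(vm_site: str, advertised_site: str = "dc1") -> list[str]:
    """Hops a client's inbound packet takes to reach the VM."""
    path = ["client", advertised_site]  # routing still points at the original site
    if vm_site != advertised_site:
        path.append("DCI")              # bridged across the interconnect
    path.append(f"vm@{vm_site}")
    return path

def response_path(vm_site: str, gateway_site: str) -> list[str]:
    """Hops the VM's reply takes via its default gateway."""
    path = [f"vm@{vm_site}"]
    if gateway_site != vm_site:
        path.append("DCI")              # reply crosses back to reach the gateway
    path += [gateway_site, "client"]
    return path

def dci_crossings(vm_site: str, gateway_site: str) -> int:
    """Total DCI traversals for one request plus its response."""
    hops = request_path(vm_site) + response_path(vm_site, gateway_site)
    return hops.count("DCI")

print(request_path("dc2"))          # ['client', 'dc1', 'DCI', 'vm@dc2']
print(response_path("dc2", "dc1"))  # ['vm@dc2', 'DCI', 'dc1', 'client']
print(dci_crossings("dc2", "dc1"))  # 2
print(dci_crossings("dc2", "dc2"))  # 1: a gateway local to dc2 saves the return trip
```

Even with a local gateway, inbound traffic still crosses the DCI once, because the subnet is still routed to the original site.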

All for one very simple reason: a VM needs to keep its IP address when it moves. It’s IP statefulness, and there are various solutions that attempt to address its limitations. Some DCI technologies like OTV help keep default gateways local to each data center, so when a server responds, at least the reply doesn’t trombone back through the original data center. LISP is (another) overlay protocol, meant to decouple a VM’s location from its identity and help with mobility. But as you add all these stopgap solutions on top of each other, it becomes more and more cumbersome (and expensive) to manage.
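LISP’s core idea (keep the VM’s IP address as a stable identifier, and keep a separate mapping of where it currently lives) can be sketched in a few lines of Python. This is a conceptual model only, assuming a single authoritative mapping table; it leaves out LISP’s actual encapsulation, map-request messages, and caching, and every class name and address here is hypothetical.

```python
# Conceptual sketch of identity/location separation in the spirit of LISP.
# NOT the LISP wire protocol or any vendor API; names are hypothetical.
# EID (endpoint identifier): the VM's IP address, which never changes.
# RLOC (routing locator): the site/router currently hosting that VM.
from dataclasses import dataclass, field

@dataclass
class MappingSystem:
    eid_to_rloc: dict[str, str] = field(default_factory=dict)

    def register(self, eid: str, rloc: str) -> None:
        """Called when a VM comes up at (or moves to) a site."""
        self.eid_to_rloc[eid] = rloc

    def resolve(self, eid: str) -> str:
        """An ingress router asks where to tunnel traffic for this EID."""
        return self.eid_to_rloc[eid]

def deliver(mapping: MappingSystem, dst_eid: str) -> str:
    # Traffic is encapsulated toward the current locator; the inner
    # destination (the VM's own IP) is left untouched.
    return f"tunnel to {mapping.resolve(dst_eid)} carrying packet for {dst_eid}"

ms = MappingSystem()
ms.register("10.1.1.50", "rloc-dc1")
print(deliver(ms, "10.1.1.50"))  # tunnel to rloc-dc1 carrying packet for 10.1.1.50
ms.register("10.1.1.50", "rloc-dc2")  # VM moves; only the mapping changes
print(deliver(ms, "10.1.1.50"))  # tunnel to rloc-dc2 carrying packet for 10.1.1.50
```

Note what the sketch doesn’t remove: the mapping table, the tunnels, and keeping both in sync are yet another layer to run on top of the DCI.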

All of this because a VM doesn’t want to give its IP address up.

But that isn’t the reason why we need to let go of vMotion.

The real reason why it needs to go is that it’s holding us back.

Do you want to really scale your application? Do you want to have failover from one data center to another, especially over distances greater than 200 kilometers? Do you want to be able to “follow the Sun” in terms of moving your workload? You can’t rely on vMotion. It’s not going to do it, even with all the band-aids meant to help it.

The sites that are doing this type of scaling are not relying on vMotion; they’re decoupling the application from the VM. It’s the metaphor of pets versus cattle (or as I like to refer to it, bridge crew versus redshirts). Pets are the old way, the traditional virtualization model. We care deeply what happens to a VM, so we put in all sorts of safety nets to keep that VM safe: vMotion, HA, DRS, even Fault Tolerance. With cattle (or redshirts), we don’t really care what happens to the VMs. The application is decoupled from the VM, and state is not stored solely on a single VM. The “shopping cart” problem, familiar to those who work with load balancers, isn’t an issue, so a simple load balancer is all that’s required: it can send traffic to another server without disrupting the user experience. Any VM can go away at any tier (database, application, presentation/web) and the user experience will be undisturbed. We don’t shed a tear when a redshirt bites it, so vMotion, HA, and DRS aren’t needed.
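Here’s a minimal Python sketch of the redshirt model: stateless web servers behind a plain round-robin load balancer, with the shopping cart kept in a shared store instead of any one VM’s memory. `SessionStore` is a hypothetical stand-in for a replicated external store (a database or cache cluster); in a real deployment that store must itself be redundant, or it just becomes the new pet.

```python
# Sketch of "cattle" web servers: every instance is interchangeable because
# the shopping cart lives in a shared store, not in a single VM's memory.
# Assumption: SessionStore stands in for a replicated external store;
# all class names here are hypothetical.
from itertools import cycle

class SessionStore:
    def __init__(self) -> None:
        self._carts: dict[str, list[str]] = {}

    def add_item(self, session_id: str, item: str) -> None:
        self._carts.setdefault(session_id, []).append(item)

    def cart(self, session_id: str) -> list[str]:
        return list(self._carts.get(session_id, []))

class WebServer:
    """Stateless: keeps no per-user data between requests."""
    def __init__(self, name: str, store: SessionStore) -> None:
        self.name = name
        self.store = store

    def handle_add(self, session_id: str, item: str) -> str:
        self.store.add_item(session_id, item)
        return self.name  # report which instance served the request

class LoadBalancer:
    """Plain round robin over whichever servers are currently alive."""
    def __init__(self, servers: list[WebServer]) -> None:
        self.servers = list(servers)
        self._rr = cycle(self.servers)

    def remove(self, server: WebServer) -> None:
        # A redshirt goes down: drop it from rotation, no migration needed.
        self.servers.remove(server)
        self._rr = cycle(self.servers)

    def route_add(self, session_id: str, item: str) -> str:
        return next(self._rr).handle_add(session_id, item)

store = SessionStore()
web = [WebServer(f"web{i}", store) for i in range(3)]
lb = LoadBalancer(web)
print(lb.route_add("alice", "phaser"))     # web0
lb.remove(web[0])                          # the server that took the first request dies
print(lb.route_add("alice", "tricorder"))  # web1
print(store.cart("alice"))                 # ['phaser', 'tricorder']
```

Because no per-user state lives on `web0`, losing it costs nothing but capacity; there’s no VM to rescue, so there’s nothing for vMotion or HA to do.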

If you write your applications and build your application stack as if vMotion didn’t exist, scaling, redundancy, and geographic diversification get a lot easier. If your platform requires Layer 2 adjacency, you’re doing it wrong (and you’ll be severely limited in how you can scale).

And don’t take my word for it. Take a look at any of the huge web sites (Netflix, Twitter, Facebook): they all shard their workloads across the globe and across their infrastructure (or Amazon’s). Most of them don’t even use virtualization. Traditional servers sitting behind a load balancer with an active/standby pair of databases on the back end isn’t going to cut it.

When you talk about sharding, make sure people know it’s spelled with a “D”.
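For anyone who hasn’t seen sharding up close, here’s a minimal hash-based version in Python: each user is pinned to one shard by a stable hash of their key, so no single database holds everyone. The shard names are hypothetical, and plain modulo hashing is a simplification; real systems typically use consistent hashing or a lookup directory so that adding a shard doesn’t remap most keys.

```python
# Minimal hash-based sharding sketch: each user maps to exactly one shard.
# Assumptions: shard names are hypothetical; modulo hashing is used for
# simplicity and remaps most keys whenever the shard count changes.
import hashlib

SHARDS = ["us-east", "eu-west", "ap-south"]

def shard_for(user_id: str, shards: list[str] = SHARDS) -> str:
    # Use a stable digest rather than Python's built-in hash(), which is
    # salted per process for strings and would move users between runs.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[index]

for user in ("kirk", "sisko", "janeway"):
    print(user, "->", shard_for(user))
```

Every front-end server computes the same answer independently, which is what lets any of them (any redshirt) serve any request.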

If you write an application on Amazon’s AWS, you’re probably already doing this, since there’s no vMotion in AWS. If an Amazon data center has a problem, then as long as the application is architected correctly (again, in the application itself), I can still watch my episodes of Star Trek: Deep Space 9. It takes more work to do it this way, but it’s a far more effective way to scale and diversify geographically.
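A minimal Python sketch of that application-level failover: try sites in preference order and skip any that fail a health check. The site names and the health-check callable are hypothetical stand-ins; in practice this is usually done with DNS-based or anycast global load balancing plus health checks, and it only works if the surviving site already has the data (replicated or sharded as above).

```python
# Sketch of application-level failover across sites.
# Assumptions: site names and the health-check function are hypothetical;
# the application is stateless and its data is available at every site.
from typing import Callable, Optional

def pick_site(preferred: list[str],
              is_healthy: Callable[[str], bool]) -> Optional[str]:
    """Return the first healthy site in preference order, or None."""
    for site in preferred:
        if is_healthy(site):
            return site
    return None  # every site is down: nothing left to fail over to

def stream_episode(episode: str, preferred: list[str],
                   is_healthy: Callable[[str], bool]) -> str:
    site = pick_site(preferred, is_healthy)
    if site is None:
        raise RuntimeError("no healthy site available")
    return f"streaming {episode} from {site}"

down = {"region-a"}  # simulate an outage in one data center
print(stream_episode("DS9 S01E01", ["region-a", "region-b"],
                     lambda s: s not in down))
# streaming DS9 S01E01 from region-b
```

No VM moved anywhere; the request simply went to a site that was already running.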

It’s much easier (and quicker) to write a web application for the traditional model of virtualization, and most sites’ first outing will probably be done this way. But if you want to scale, it will be way easier (and more effective) to build and scale an application that doesn’t depend on vMotion.

VMware’s vMotion (and Live Migration, and other similar technologies by other vendors) had their place, and they helped us move from the physical to the virtual. But now it’s holding us back, and it’s time for it to go.

I wouldn’t agree that vMotion is holding us back or that it’s preventing applications from scaling. For local datacenter availability and management it provides a valuable service. For inter-datacenter availability, that’s where you need the scale built into the applications. But the two are complementary. In 2007 I built an application architecture for a large financial system that distributed the application across multiple datacenters and was always on. If we lost a single component, or even multiple components, it made no difference: the application just kept going and the users were none the wiser. This was virtualized and leveraged vMotion, HA, DRS, etc. at each local site, but we didn’t use them between sites because of the distance (400 miles), the cost and limited amount of bandwidth (only 200 Mb/s), and because there were many more appropriate solutions. I don’t think this has changed. It was far easier, far less complex, and far less costly to build availability and scale into the application than to try to stretch the datacenter. But we would have been far worse off without virtualization and the ability to use vMotion, HA, and DRS; the costs would have been many tens of millions of dollars more without these essential VMware technologies. So I strongly disagree that vMotion needs to die. In fact, I think we need to leverage the best that the infrastructure has to offer at the local datacenter level, while taking a common-sense approach to application scale and availability. Besides, not every application needs this scale, and you should be designing and architecting to the requirements.


Software-defined networking is supposed to alleviate most of the problems you are describing by decoupling the addressing from the infrastructure. This solves the age-old “but I can’t merge two networks together without redoing everything” problem, which limits application mobility. This is a legacy from the old ways of coupling the web server with the IP addressing, and it causes all kinds of headaches when trying to migrate multitenant VMs to another location.

If anything, it’s the fault of the applications, which are built without virtualization in mind, rather than vMotion or Hyper-V or whatever.

The fatal flaw is trying to trick legacy crap into thinking that it’s running on dedicated hardware. The future of virt should and would involve deep hooks from the host down to the application/service on the guest. (Hyper-V has some notion of this, i.e. VSS.)

I believe your article should say that the perception of vMotion is holding us back. vMotion is great for certain areas, such as small to mid-size companies that need reliable infrastructure and the ability to move it from one data center to another. Most people believe vMotion is the only solution for moving VMs and don’t consider a better application design, because the human and monetary resources for a redesign aren’t available. Most off-the-shelf apps are built for standard virtualization techniques, which work well until larger firms can build a VM-based solution that is free or affordable.

When you’re building apps in the cloud or on the internet, vMotion isn’t the right tool for moving the infrastructure without hitting some barriers. But to move forward, the perception must change.

In one post you said something valid and something invalid. Let’s break this up.

“The fatal flaw is trying to trick legacy crap into thinking that it’s running on dedicated hardware. The future of virt should and would involve deep hooks from the host down to the application/service on the guest. (Hyper-V has some notion of this, i.e. VSS.)”

First, it’s not just “legacy crap”. Many high-transaction, high-volume, processing-heavy applications (often built on C++ or C#/WPF) need “on the bone” access, not the illusion of it. The need is real and should not be glossed over by VMware or any other virtualization vendor. That’s actually doable, but it contradicts the virtualization sales pitch.

A top-tier content management solution will require this access to run at peak, though not to run at all. Any real OCR solution is the same: it needs this access to run at peak, not to run.

The question providers need to ask is: is all we care about “just getting by”? Or do we truly want the best out of the platforms we’re giving to users? Are we fine making our (IT) lives easier at the expense of users’ expectations of performance? Because that’s not a company I’d work for. Solutions should benefit users, not IT.

“This is a legacy from the old ways of coupling the web server with the IP addressing, and it causes all kinds of headaches when trying to migrate multitenant VMs to another location.

If anything, it’s the fault of the applications, which are built without virtualization in mind, rather than vMotion or Hyper-V or whatever.”

First, the IP addressing situation has nothing to do with the web and everything to do with the NIC, virtual or otherwise. Blame Microsoft, or Apple, or Unix, not “web”. Even a non-web server that needs some level of connectivity requires an IP address, ideally a static one, which means your subnetting needs to make sense. Now, the trick is to consider load balancing so that when addresses change across subnets, the underlying application doesn’t care that the IP changed. Load balancers are expensive, but they avoid the issue you’re talking about.

It’s not the application’s fault; it’s a slave. It’s VMware’s or Microsoft’s fault for not solving the problem of preserving state when you’re moving files across the network. For simple, stateless web applications like an intranet site, vMotion is fine. The HTTP protocol is designed to “wait” for a reply, no matter how long that takes. But with direct-connect applications that involve heavy database traffic, client/server interactions, and usability, there simply can’t be any disruption. That’s a virtualization problem, not an application problem.




Interesting. How would you propose to distribute load across physical servers locally within a data centre?

Nope. Go Fish.

You, uhh, gonna back that up with anything?