Cisco ACE: Insert Client IP Address

May 17, 2012

Source-NAT (also referred to as one-armed mode) is a common way of implementing load balancers into a network. It has several advantages over routed-mode (where the load balancer is the default gateway of the servers), most importantly that the load balancer doesn’t need to be Layer 2 adjacent/on the same subnet as the servers. As long as the SNAT IP address of the load balancer has bi-directional communication with the IP address of the servers, the load balancer can be anywhere. A different subnet, a different data center, even a different continent.

However, one drawback is that with Source-NAT the client’s IP address is obscured. The server’s logs will show only the load balancer’s SNAT address(es).

There is a way to remedy that if the traffic is HTTP/HTTPS, and that’s by having the load balancer insert the true source IP address as a header in the client’s HTTP request. On the ACE, you do this with an insert-http statement in the load-balancing policy-map.

    policy-map type loadbalance http first-match VIP1_L7_POLICY
      class class-default
        serverfarm FARM1
        insert-http x-forwarded-for header-value "%is"

But that alone is not enough. There are two extra steps you need to take.

The first step is to tell the web server to log the X-Forwarded-For header. For Apache, it’s a configuration file change. For IIS, you need to run an ISAPI filter.

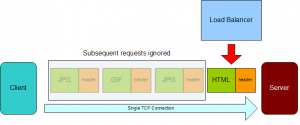

The other thing you need to do is fix the ACE’s attention span. You see, by default the ACE has a short attention span. The HTTP protocol allows you to make multiple HTTP requests on a single TCP connection. By default, the ACE will only evaluate/manipulate the first HTTP request in a TCP connection.

So your log files will look like this:

    1.1.1.1 "GET /lb/archive/10-2002/index.htm"
    - "GET /lb/archive/10-2003/index.html"
    - "GET /lb/archive/05-2004/0100.html HTTP/1.1"
    2.2.2.2 "GET /lb/archive/10-2007/0010.html"
    - "GET /lb/archive/index.php"
    - "GET /lb/archive/09-2002/0001.html"

The “-” indicates Apache couldn’t find the header, because the ACE didn’t insert it. The ACE did add the first source IP address, but every request after it in the same TCP connection was ignored.
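If you want to check how widespread the problem is, a small script can count the log entries that are missing the header. The following is only a sketch: it assumes each log line has the shape shown in this post (the X-Forwarded-For value or "-", followed by the quoted request), which would correspond to an Apache LogFormat along the lines of `%{X-Forwarded-For}i \"%r\"`. The function name `missing_header_report` is made up for illustration.

```python
import re

# Assumed line shape: <xff-or-dash> "<request line>"
LINE_RE = re.compile(r'^(?P<xff>\S+) "(?P<request>[^"]*)"$')


def missing_header_report(lines):
    """Return (total parsed entries, list of requests logged without the header)."""
    total = 0
    missing = []
    for line in lines:
        match = LINE_RE.match(line.strip())
        if not match:
            continue  # skip anything that is not in the assumed format
        total += 1
        if match.group("xff") == "-":
            missing.append(match.group("request"))
    return total, missing


if __name__ == "__main__":
    sample = [
        '1.1.1.1 "GET /lb/archive/10-2002/index.htm"',
        '- "GET /lb/archive/10-2003/index.html"',
        '- "GET /lb/archive/05-2004/0100.html HTTP/1.1"',
    ]
    total, missing = missing_header_report(sample)
    print(f"{len(missing)} of {total} entries have no client IP")
```

A high share of "-" entries on a VIP that has header insertion configured is a strong hint that only the first request per connection is being rewritten.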

Why does the ACE do this? For one, it’s less work. Browsers can make dozens or even hundreds of requests over a single connection, so evaluating and manipulating only the first request in each connection saves significant resources. Also, most of the time when L7 configurations are used, it’s for cookie-based persistence. If that’s the case, all the requests in the same TCP connection are going to contain the same cookies anyway.
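To see several requests sharing one TCP connection for yourself, here is a minimal sketch using only the Python standard library. It starts a throwaway local server (the `EchoHandler` class and `demo` function are hypothetical names, not anything from the ACE) that replies with the client's source port and whatever X-Forwarded-For value it received, then sends three requests over a single keep-alive connection. There is no load balancer in this setup, so the header is expected to be absent; pointing `requests_on_one_connection` at a VIP instead would be one way to test whether every request gets the header.

```python
import http.client
import socketserver
import threading
from http.server import BaseHTTPRequestHandler


class EchoHandler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # lets the connection stay open between requests

    def do_GET(self):
        # Reply with the client's source port and the X-Forwarded-For value ("-" if absent).
        xff = self.headers.get("X-Forwarded-For", "-")
        body = f"{self.client_address[1]} {xff}".encode()
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo quiet


def requests_on_one_connection(host, port, paths):
    """Send one GET per path over a single TCP connection and return the bodies."""
    conn = http.client.HTTPConnection(host, port, timeout=5)
    bodies = []
    try:
        for path in paths:
            conn.request("GET", path)
            bodies.append(conn.getresponse().read().decode())
    finally:
        conn.close()
    return bodies


def demo():
    server = socketserver.TCPServer(("127.0.0.1", 0), EchoHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        port = server.server_address[1]
        return requests_on_one_connection("127.0.0.1", port, ["/a", "/b", "/c"])
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    for line in demo():
        print(line)
```

Each reply reports the same source port, which shows that all three requests travelled over one TCP connection.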

How do you fix it? By using a very ill-named feature called persistence-rebalance. This gives the ACE a longer attention span, telling the ACE to look at every HTTP request in the TCP connection.
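The difference between the two behaviors can be captured in a toy model. This is purely illustrative and not how the ACE is implemented: `logged_entries` is a hypothetical function that takes the requests of one TCP connection and returns what the web server would log, with the `every_request` flag standing in for persistence-rebalance.

```python
def logged_entries(connection_requests, client_ip, every_request):
    """Model what the server logs for one TCP connection.

    every_request=False mimics the default (header on the first request only);
    every_request=True mimics persistence-rebalance (header on every request).
    """
    entries = []
    for index, request in enumerate(connection_requests):
        if every_request or index == 0:
            entries.append((client_ip, request))
        else:
            entries.append(("-", request))  # header was never inserted
    return entries


if __name__ == "__main__":
    requests = ["GET /index.html", "GET /style.css", "GET /logo.png"]
    for flag in (False, True):
        print(f"every_request={flag}")
        for xff, request in logged_entries(requests, "1.1.1.1", flag):
            print(f'  {xff} "{request}"')
```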

First, create an HTTP parameter-map.

    parameter-map type http HTTP_LONG_ATTENTION_SPAN
      persistence-rebalance

Then apply the parameter-map to the VIP in the multi-match policy map.

    policy-map multi-match VIPsOnInterface
      class VIP1
        loadbalance vip inservice
        loadbalance policy VIP1_L7_POLICY
        appl-parameter http advanced-options HTTP_LONG_ATTENTION_SPAN

Once that’s applied, the IP address will show up in all of the log entries.

    1.1.1.1 "GET /lb/archive/10-2002/index.htm"
    2.2.2.2 "GET /lb/archive/10-2003/index.html"
    1.1.1.1 "GET /lb/archive/05-2004/0100.html HTTP/1.1"
    2.2.2.2 "GET /lb/archive/10-2007/0010.html"
    1.1.1.1 "GET /lb/archive/index.php"
    2.2.2.2 "GET /lb/archive/09-2002/0001.html"

But remember, configuring the ACE (or load balancers in general) isn’t the only step you need to perform. You also need to tell the web server (Apache, Nginx, IIS) to use the header as well. None of them automatically use the X-Forwarded-For header.

I don’t know if they’ll try to trick you with this in the CCIE Lab, but it’s something to keep in mind for the CCIE and for implementations.