OpenFlow’s Awkward Teen Years

April 12, 2012

During the Networking Field Day 3 (The Nerdening) event, I attended a presentation by NEC (yeah, I know, turns out they make switches and have a shipping OpenFlow product, who knew?).

This was actually the second time I’ve seen this presentation. The first was at Networking Field Day 2 (The Electric Boogaloo), and my initial impressions can be found in my post here.

So what is OpenFlow? Well, it could be a lot of things. More generically it could be considered Software Defined Networking (SDN). (All OpenFlow is SDN, but not all SDN is OpenFlow?) It’s adding a layer of intelligence to a network that we have previously lacked. On a more technical level, OpenFlow is a way to program the forwarding tables of L2/L3 switches using a standard API.

Rather than each switch building its own forwarding tables through MAC learning, routing protocols, and policy-based routing, the switches (which could be from multiple vendors) are brainless zombies, accepting forwarding table updates from a maniacal controller that can perceive the entire network.
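To make the zombie/controller split a little more concrete, here’s a toy sketch in Python. It is not the OpenFlow protocol and has nothing to do with NEC’s actual product: the `Switch` and `Controller` classes, the three-switch topology, and the host names are all made up for illustration. The only point is that the switches learn nothing on their own, and the controller, which sees the whole topology, computes every table.

```python
from collections import deque


class Switch:
    """A 'brainless' switch: it only stores what the controller tells it."""

    def __init__(self, name):
        self.name = name
        self.table = {}  # destination host -> next hop (neighbor or host name)

    def install(self, dst, next_hop):
        self.table[dst] = next_hop


class Controller:
    """Hypothetical controller that sees the whole topology."""

    def __init__(self, links, hosts):
        # links: iterable of (switch_a, switch_b)
        # hosts: dict of host name -> name of the switch it attaches to
        self.adj = {}
        for a, b in links:
            self.adj.setdefault(a, set()).add(b)
            self.adj.setdefault(b, set()).add(a)
        self.hosts = hosts
        self.switches = {name: Switch(name) for name in self.adj}

    def program(self):
        """Compute and push a forwarding entry for every host on every switch."""
        for host, edge in self.hosts.items():
            # The edge switch delivers straight to the host.
            self.switches[edge].install(host, host)
            # Breadth-first search outward from the edge switch: each switch
            # we reach forwards toward the neighbor we reached it from.
            seen = {edge}
            queue = deque([edge])
            while queue:
                cur = queue.popleft()
                for nbr in sorted(self.adj[cur]):
                    if nbr not in seen:
                        seen.add(nbr)
                        self.switches[nbr].install(host, cur)
                        queue.append(nbr)


# Three switches in a line, one host hanging off each end (all names assumed).
ctl = Controller(links=[("s1", "s2"), ("s2", "s3")],
                 hosts={"h1": "s1", "h3": "s3"})
ctl.program()
for sw in ctl.switches.values():
    print(sw.name, sw.table)
```

Notice there’s no MAC learning and no routing protocol anywhere in the `Switch` class. That’s the whole idea.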

This could be used in large data centers or large global networks (WANs), but the NEC demonstration showed OpenFlow as a data center fabric. In some respects, it’s similar to Juniper’s QFabric and Brocade’s VCS. They all have a boundary, and connections to networks outside of that boundary are made through traditional routing and switching mechanisms, such as OSPF or MLAG (Multi-chassis Link Aggregation). Juniper’s QFabric is based on Juniper’s own proprietary machinations, Brocade’s VCS is based on TRILL (although not [yet?] interoperable with other TRILL implementations), and NEC’s OpenFlow is based on, well, OpenFlow.

While it was the same presentation, the delegates (a few of us had seen the presentation before, most had not) were much tougher on NEC than we were last time. Or at least I’m sure it seemed that way. The fault wasn’t NEC’s; it was the fact that our understanding of OpenFlow and its capabilities has increased, and that’s the awkward teen years of any technology. We were poking and prodding, thinking of new uses and new implications, limitations and caveats.

As we start to understand a technology better, we start to see its potential, as well as probe its potential drawbacks. Everything works great in PowerPoint, after all. So while our questions seemed tougher, don’t take that as a sign that we’re souring on OpenFlow. We’re only tough because we care.

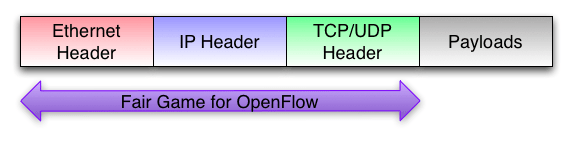

One thing that OpenFlow will likely not be is a way to manipulate individual flows, or at least all individual flows. The “flow” in OpenFlow does not necessarily (and in all likelihood would rarely, if ever) represent an individual 6-tuple TCP connection or UDP flow (a source and destination MAC, IP, and TCP/UDP port). One of the common issues that OpenFlow haters/doubters/skeptics have brought up is that an OpenFlow controller can only program about 700 flows per second into a switch. That’s certainly not enough to handle a site that may see hundreds of thousands of new TCP connections/UDP flows per second.

But that’s not what OpenFlow is meant to do, nor is that a huge issue. Think about routing protocols and Ethernet forwarding mechanisms. Neither deals with specific flows, only general flows (all traffic to a particular MAC address goes to this port, this /8 network goes out this interface, etc.). OpenFlow isn’t any different. So a limit of 700 flows per second per switch? Not a big deal.
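If the “general flows” idea feels hand-wavy, here’s a rough Python sketch of it. The rule table, prefixes, and port names are invented for the example, and the lookup is a plain most-specific-prefix match, not real OpenFlow match semantics. It pushes 10,000 distinct connections through a table of just three coarse rules.

```python
import ipaddress

# Hypothetical aggregate rules: (destination prefix, output port).
RULES = [
    (ipaddress.ip_network("10.0.0.0/8"), "port1"),
    (ipaddress.ip_network("10.1.2.0/24"), "port2"),
    (ipaddress.ip_network("0.0.0.0/0"), "uplink"),
]


def lookup(dst):
    """Return the output port of the most specific rule matching dst."""
    addr = ipaddress.ip_address(dst)
    matches = [(net.prefixlen, port) for net, port in RULES if addr in net]
    return max(matches)[1]  # longest prefix wins


# 10,000 distinct (src IP, src port, dst IP, dst port) connections,
# all headed somewhere inside 10.0.0.0/8 but never into 10.1.2.0/24.
connections = [
    (f"192.0.2.{i % 250 + 1}", 1024 + i,
     f"10.{i % 200}.{i % 7 + 3}.{i % 250 + 1}", 80)
    for i in range(10_000)
]

ports_used = {lookup(dst) for _, _, dst, _ in connections}
print(len(connections), "connections handled by", len(RULES), "rules")
print("output ports used:", ports_used)

# At the roughly 700 flow entries per second quoted above, installing
# this whole table is a rounding error.
print("seconds to install table:", len(RULES) / 700)
```

The number of new connections per second never shows up in the math. What matters is how many rules the controller has to install, and how often those change.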

OpenFlow is another way to build an Ethernet fabric, which means offering services and configurations beyond just a Layer 2/Layer 3 network.

Think about how we deal with firewalls now. Scaling is an issue, so they’re often choke points. You have to direct traffic in and out of them (NAT, transparent mode, routed mode), and they’re often deployed in pairs. N+1 firewalls are not that common (and often a huge pain in the ass to configure, although it’s been a while). With OpenFlow (or SDN in general) it’s possible to define an endpoint (VM or physical) and say that flows to and from that endpoint must pass through a firewall. Since we can steer flows (again, not on a per-individual-flow basis, but with general catch-most rules), scaling a firewall isn’t an issue. Need more capacity? Throw another firewall/IPS/IDS on the barbie. OpenFlow could put forwarding rules on the switches and steer flows between active/active/active firewalls. These services could also be tied to the VM, and not the individual switch port (which is a characteristic of a fabric).
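Here’s one way the steering could look, as a back-of-the-napkin Python sketch. Everything in it is an assumption on my part: the firewall and VM names are made up, and hashing the endpoint name with CRC32 is just one simple way to spread endpoints across a pool, not something NEC showed. The rules are per endpoint, not per connection, which is the whole point.

```python
import zlib


def pick_firewall(endpoint, firewalls):
    """Deterministically map an endpoint (VM name or IP) to one firewall."""
    index = zlib.crc32(endpoint.encode()) % len(firewalls)
    return firewalls[index]


def steering_rules(endpoints, firewalls):
    """One coarse rule per endpoint: 'traffic for X goes via firewall Y'."""
    return {ep: pick_firewall(ep, firewalls) for ep in endpoints}


# Hypothetical protected endpoints and an active/active/active pool.
vms = [f"vm-{n}" for n in range(12)]
pool = ["fw-a", "fw-b", "fw-c"]

rules = steering_rules(vms, pool)
for vm, fw in rules.items():
    print(vm, "->", fw)

# Need more capacity? Add a firewall and recompute the rules.
bigger_rules = steering_rules(vms, pool + ["fw-d"])
```

One caveat with this naive version: a plain modulo hash reshuffles a lot of endpoints when the pool grows, which stateful firewalls won’t love. A real controller would want something stickier, like consistent hashing or explicit assignment.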

Myself, I’m all for any technology that abolishes spanning-tree/traditional Layer 2 forwarding. It’s an assbackwards way of doing things, and if that’s how we ran Layer 3 networks, network administrators would have revolted by now. (I’m surprised more network administrators aren’t outraged by spanning-tree, but that’s another blog post.)

NEC’s OpenFlow (and OpenFlow in general) is an interesting direction in the effort to make networks more intelligent (aka “fabrics”). Some vendors are a bit dismissive of OpenFlow, and of fabrics in general. My take is that fabrics are a good thing, especially with virtualization. But more on that later. NEC’s OpenFlow product is interesting, but like any new technology it’s got a lot to prove (and the fact that it’s NEC means they have even more to prove).

Disclaimer: I was invited graciously by Stephen Foskett and Greg Ferro to be a delegate at Networking Field Day 3. My time was unpaid. The vendors who presented indirectly paid for my meals and lodging, but otherwise the vendors did not give me any compensation for my time, and there was no expectation (specific or implied) that I write about them, or write positively about them. I’ve even called a presenter’s product shit, because it was. Also, I wrote this blog post mostly in Aruba while drunk.