I started out my career as a condescending Unix administrator, and while I’m not a Unix administrator anymore, I’m still quite condescending. In the past, I’ve run data centers based on Linux, FreeBSD, Solaris, as well as administered Windows boxes, OpenBSD and NetBSD, and even NeXTSTEP (best desktop in the 90s).

In my role as a network administrator (and network instructor), this experience has become invaluable. Why? One reason is that most networking devices these days have an open sourced based operating system as the underlying OS.

And recently, I got into a discussion on Twitter (OK, kind of a twitter fight, but it’s all good with the other party) about the underlying operating systems for these network devices, and their relevance. My position? The underlying OS is mostly irrelevant.

First of all, the term OS can mean a great many things. In the context of this post, when I talk about OS I’m referring to only the underlying OS. That’s the kernel, libraries, command line, drivers, networking stack, and file system. I’m not referring to the GUI stack (GNOME, KDE, or Unity for the Unixes, Mac OS X’s GUI stack, Win32 for Window) or other types of stack such as a web application stack like LAMP (Linux, Apache, MySQL, and PHP).

Most routers and MLS (multi-layer switches, swtiches that can route as fast as they can switch) run an open source operating system as its control plane. The biggest exception is of course Cisco’s IOS, which is proprietary as hell. But IOS has reached its limits, and Cisco’s NX-OS, which runs on Cisco’s next-gen Nexus switches, is based on Linux. Arista famously runs Linux (Fedora Core) and doesn’t hide it from the users (which allows it to do some really cool things). Juniper’s Junos is based on FreeBSD.

In almost every case of router and multi-layer switch however, the operating system doesn’t forward any packets. That is all handled in specialized silicon. The operating system is only responsible for the control plane, running processes like an OSPF, spanning-tree, BGP, and other services to decide on a set of rules for forwarding incoming packets and frames. These rules, sometimes called a FIB (Forwarding Information Base), are programmed into the hardware forwarding engines (such as the much-used Broadcom Trident chipset). These forwarding engines do the actual switching/routing. Packets don’t hit the general x86 CPU, they’re all handled in the hardware. The control plane (running as various coordinated processes on top of a one of these open source operating systems) tells the hardware how to handle packets.

So the only thing the operating system does (other than the occasional punted packet) is tell the hardware how to handle traffic the general CPU will never see. This is the way it has to be, because x86 hardware can’t scale nearly as well as special purpose silicon can, especially considering power and cooling consumption. Latency is way lower as well.

In fact, hardware wise, most vendors (Juniper, Arista, Huawei, Alcatel-Lucent ,etc.) have been using the exact same chip in their latest switches. So the differentiation isn’t the silicon. Is the differentiation the underlying operating system? No, it makes little difference for the end user. They are instead a (mostly) invisible platform for which the services (CLI, APIs, routing protocols, SDN hooks, etc.) are built upon. Networking vendors are in the middle of a transition into software developers (and motherboard gluers).

All you need to create a 10 Gigabit Switch

The biggest holdout in networking devices and non-open source is of course, Cisco’s IOS, which is proprietary as hell. Still, the future for Cisco appears to be NX-OS running on all of the Nexus switches, and that’s based on Linux.

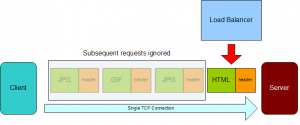

Let’s also take a look at networking devices where the underlying OS may actually touch the data plane, and a genre in which I’m very much acquatned with: Load balancers (and no, I’m not calling them Application Delivery Controllers).

F5’s venerable BIG-IPs used to be based on BSDI initially (a years-dead BSD), and then switched to Linux. CoyotePoint was based on FreeBSD, and is now based on NetBSD. Cisco’s ACE is based on Linux (although Cisco’s shitty CSS runs proprietary vxWorks, but it’s not shitty because of vxWorks). Most of the other vendors are based on Linux. However, the baseline operating system makes very little difference these days.

Most load balancers have SSL offload (to push the CPU-intensive asymmetric encryption onto a specialized processor). This is especially important as we move to 2048-bit SSL certificates. Some load balancers have Layer 2/3/4 silicon (either ASICs or FPGAs, which are flexible ASICs) to help out with forwarding traffic, and hit general CPUs (usually x86) for the Layer 7 parsing. So does the operating system touch the traffic going through a load balancer? Usually, not always, and well, it depends.

So with Cisco on Linux and Juniper with FreeBSD, would either company benefit from switching to a different OS? Does either company enjoy a competitive advantage by having chose their respective platform? No. In fact, switching platforms would likely be a colossal waist of time and resources. The underlying operating systems just provide some common services to run the networking services that program the line cards and silicon.

When I brought up Arista and their Fedora Core-based control plane which they open up to customers, here’s what someone (a BSD fan) described Fedora as: “Inconsistent and convoluted”, “building/testing/development as painful”, and “hasn’t a stable file system after 10 years”.

Reading that statement, you’d think that dealing with Fedora is a nightmare. That’s not remotely true. Some of that statement is exaggeration (and you could find specific examples to support that statement for any operating system) and some of it is fantasy. No stable file system? Linux has had several file systems, including ext2, ext3, ext4, XFS, and more for a while, and they’ve been solid.

In a general sense, I think the operating system is less relevant than it used to be. Take OpenBSD for example. It’s well deserved reputation for security is legendary. Still, would there be any advantage today to running your web application stack on OpenBSD? Would your site be any more secure? Probably not. Not because OpenBSD is any less secure today than it was a while ago, quite the opposite. It’s because the attack vectors have changed. The attacks are hitting the web stack and other pieces rather than the underlying operating system. Local exploits aren’t that big of deal because few systems let anyone but a few users log in anyway. The biggest attacks lately have come from either SQL injection or attacks on desktop operating systems (mostly Windows, but now recently Apple as well).

If you’re going to expose a server directly to the Internet on a DMZ or (gasp) without any firewall at all, OpenBSD is an attractive choice. But that doesn’t happen much anymore. Servers are typically protected by layers of firealls, IPS/IDS, and load balancers.

Would Android be more successful or less successful if Google switched from Linux as the underpinnings to one of the BSDs? Would it be more secure if they switched to OpenBSD? No, and it would it be an entirely wasted effort. It’s not likely any of the security benefits of OpenBSD would translate into the Dalvik stack that is the heart of Android.

As much as fanboys/girls don’t want to admit it, it’s likely the number one reason people choose an OS is familiarity. I tend to go with Linux (although I have FreeBSD and OpenBSD-based VMs running in my infrastructure) because I’m more familiar with it. For my day to day uses, Linux or FreeBSD would both work. There’s not a competitive advantage either have over each other in that regard. Linux outright wins in some cases, such as virtualization (BSDs have been very behind in that technology, though they run fine as guests), but for most stuff it doesn’t matter. I use FreeNAS, which is FreeBSD based, but I don’t care what it runs. I’d use FreeNAS if it were based on Linux, OpenBSD, or whatever. (Because it’s based on FreeBSD, FreeNAS does run ZFS, which for some uses is better than any of the Linux file systems, although I don’t run FreeNAS’s ZFS since it’s missing encryption).

So fanboy/girlism aside, for the most part today, choice of an operating system isn’t the huge deal it may once have been. People succeed with using Linux, FreeBSD, OpenBSD, NetBSD, Windows, and more as the basis for their platforms (web stack, mobile stack, network device OS, etc.).