I’ve got about four or five articles on SDN/ACI and networking in my drafts folder, and there’s something that’s been bothering me. There’s a concept, and it doesn’t have a name (at least not one that I’m aware of, though it’s possible there is one). In networking, we’re constantly flooded with a barrage of new names. Sometimes we even give a new name to things that already have one. But naming things is important. It’s like a pointer in programming: the name of something is a pointer to the specific part of our brain that contains the understanding of a given concept.

Software Defined Forwarding

I decided SDF needed a name because I was having trouble describing a concept, a very important one, in reference to SDN. And here’s the concept, with four key aspects:

- Forwarding traffic in a way that is different than traditional MAC learning/IP forwarding.

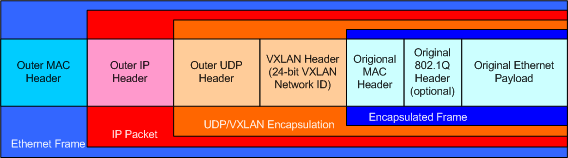

- Forwarding traffic based on more than the usual Layer 2 and Layer 3 headers (such as VXLAN headers, TCP and/or UDP headers).

- Programming these forwarding rules via a centralized controller (which would be necessary if you’re going to throw out all the traditional forwarding rules and configure this in any reasonable amount of time).

- In the case of Layer 2 adjacencies, multipathing is inherent (either through overlays or something like TRILL).
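
To make the first three aspects concrete, here is a minimal sketch of the idea: a controller pushes match/action rules to switches, and each switch forwards on whatever header fields a rule names instead of relying on MAC learning. Every name here (`Packet`, `FlowRule`, `Switch`, `Controller`, the port names) is hypothetical and made up for illustration; it isn’t the API of OpenFlow, ACI, or any other product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Packet:
    dst_mac: str
    dst_ip: str
    l4_proto: Optional[str] = None  # "tcp" or "udp"
    dst_port: Optional[int] = None
    vni: Optional[int] = None       # VXLAN network identifier


@dataclass
class FlowRule:
    priority: int
    match: dict    # header field -> required value; fields not listed are wildcards
    out_port: str

    def matches(self, pkt: Packet) -> bool:
        return all(getattr(pkt, name) == value for name, value in self.match.items())


class Switch:
    def __init__(self, name: str):
        self.name = name
        self.rules = []

    def install(self, rule: FlowRule) -> None:
        # Keep the highest-priority rule first so the most specific match wins.
        self.rules.append(rule)
        self.rules.sort(key=lambda r: r.priority, reverse=True)

    def forward(self, pkt: Packet) -> Optional[str]:
        for rule in self.rules:
            if rule.matches(pkt):
                return rule.out_port
        return None  # no rule matched: drop rather than flood


class Controller:
    """Hypothetical central controller that programs every switch it manages."""

    def __init__(self, switches):
        self.switches = list(switches)

    def push(self, rule: FlowRule) -> None:
        for sw in self.switches:
            sw.install(rule)


leaf1, leaf2 = Switch("leaf1"), Switch("leaf2")
ctrl = Controller([leaf1, leaf2])
ctrl.push(FlowRule(10, {"dst_ip": "10.0.0.5"}, "eth1"))
ctrl.push(FlowRule(20, {"dst_ip": "10.0.0.5", "l4_proto": "tcp", "dst_port": 443}, "eth2"))

web = Packet("aa:bb:cc:00:00:05", "10.0.0.5", "tcp", 443)
ssh = Packet("aa:bb:cc:00:00:05", "10.0.0.5", "tcp", 22)
print(leaf1.forward(web), leaf1.forward(ssh))
```

Both packets go to the same IP address, but the HTTPS one leaves on a different port because a higher-priority rule also matches on the TCP header. That’s the kind of decision traditional Layer 2/3 forwarding doesn’t make.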

Throwing out traditional rules of forwarding is what OpenFlow does, and OpenFlow got a lot of the SDN ball rolling. However, SDN has gone far beyond just OpenFlow, so it’s not fair to call it just OpenFlow anymore. And SDN is now far too broad a term to be specific to forwarding rules, as SDN encompasses a wide range of topics, from forwarding to automation/orchestration, APIs, network function virtualization (NFV), stack integration (compute, storage), etc. If you take a look at the shipping SDN product listing, many of the products don’t have anything to do with actually forwarding traffic. Also, technologies like FabricPath, TRILL, VCS, and SPB all do Ethernet forwarding in a non-standard way, but this is different. So herein, I think, lies the need to define a new term.

And make no mistake, SDF (or whatever we end up calling it) is going to be an important factor in what pushes SDN from hype to implementation.

Forwarding is simply how we get a packet from here to there. In traditional networking, forwarding rules are separated by layer. Layer 2 Ethernet forwarding works in one particular way (and not a particularly intelligent way), and IP routing works in another way. Layer 4 (TCP/UDP) gets a little tricky, as switches and routers typically don’t forward traffic based on Layer 4 headers. You can do something like policy-based routing, but it’s a bit cumbersome to set up. You also have load balancers handling some Layer 4-7 features, but that’s handled in a separate manner.

So traditional forwarding for Layer 2 and Layer 3 hasn’t changed much. For instance, take the example of a server plugging into a switch and powering up. Its IP address and MAC address haven’t been seen on the network, so the network is unaware of both the Layer 2 and Layer 3 addresses. The server is plugged into a switch with a port set for the right VLAN. The server wants to send a packet out to the internet, so it ARPs (WHO HAS 1.1.1.1, for example). The router (or more likely, SVI) responds with its MAC address, or the floating MAC (VMAC) of the first-hop redundancy protocol, such as VRRP or HSRP. Every switch attached to that VLAN sees this ARP and ARP response, and every switch’s Layer 2 forwarding table learns which port to find that particular MAC address on.
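
For comparison with what comes next, traditional flood-and-learn behavior can be sketched in a few lines. This is a simplified model under my own assumptions (a single switch, a single VLAN, no aging of table entries), and the class name, port numbers, and MAC addresses are all hypothetical.

```python
BROADCAST = "ff:ff:ff:ff:ff:ff"


class LearningSwitch:
    """Toy model of traditional Layer 2 forwarding: learn sources, flood unknowns."""

    def __init__(self, ports):
        self.ports = set(ports)
        self.mac_table = {}  # MAC address -> port it was last seen on

    def receive(self, in_port, src_mac, dst_mac):
        """Learn the source MAC, then return the set of ports the frame goes out of."""
        self.mac_table[src_mac] = in_port
        if dst_mac != BROADCAST and dst_mac in self.mac_table:
            return {self.mac_table[dst_mac]}
        # Broadcast or unknown destination: flood everywhere except the ingress port.
        return self.ports - {in_port}


sw = LearningSwitch(ports=[1, 2, 3])
server_mac = "aa:aa:aa:00:00:01"
gateway_mac = "bb:bb:bb:00:00:01"

arp_request = sw.receive(1, server_mac, BROADCAST)   # server ARPs for its gateway
arp_reply = sw.receive(3, gateway_mac, server_mac)   # gateway answers the server
next_frame = sw.receive(1, server_mac, gateway_mac)  # both MACs are now known
print(arp_request, arp_reply, next_frame)
```

The ARP request is flooded out of every other port, and the switch only knows where anything lives because it watched traffic go by.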

In several of the SDF implementations that I’m familiar with, such as Cisco’s ACI, NEC’s OpenFlow controller, and to a certain degree Juniper’s QFabric, things get a little weird.

Forwarding in SDF/SDN Gets a Little Weird

In SDF (or at least in many implementations of SDF; they differ in how they accomplish this), the local TOR/leaf switch is what answers the server’s ARP. Another server, on the same subnet and L2 segment (or increasingly a tenant), ARPs on a different leaf switch.

In the diagram above, note both servers have a default gateway of 1.1.1.1. The two servers are Layer 2 adjacent, existing on the same network segment (same VLAN or VXLAN). Both ARP for their default gateways, and both receive a response from their local leaf switch with an identical MAC address. Neither server would actually see the other’s ARP, because there’s no need to flood that ARP traffic (or most other BUM traffic) beyond the port that the server is connected to. BUM traffic goes to the local leaf, and the leaf can learn and forward in a more intelligent manner. The other leaf nodes don’t need to see the flooded traffic, and a centralized controller can program the forwarding tables of the leafs and spines accordingly.
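
Here’s a rough sketch of that behavior: every leaf answers ARP for the gateway itself, with the same MAC, and nothing gets flooded past the leaf. The gateway MAC value and the `Leaf` class are hypothetical, and I’m assuming the controller has already told each leaf what the gateway IP and MAC are. Real implementations differ in the details.

```python
GATEWAY_IP = "1.1.1.1"
GATEWAY_MAC = "02:00:00:00:00:01"  # hypothetical MAC shared by every leaf


class Leaf:
    """Toy leaf switch that answers gateway ARPs locally instead of flooding them."""

    def __init__(self, name, gateway_ip, gateway_mac):
        self.name = name
        self.gateway_ip = gateway_ip
        self.gateway_mac = gateway_mac
        self.flooded_to_fabric = 0  # ARP requests sent beyond this leaf

    def handle_arp_request(self, target_ip):
        if target_ip == self.gateway_ip:
            # Answered at the edge: the request never leaves the server's port.
            return self.gateway_mac
        # Anything else is out of scope for this sketch.
        return None


leaf_a = Leaf("leaf-a", GATEWAY_IP, GATEWAY_MAC)
leaf_b = Leaf("leaf-b", GATEWAY_IP, GATEWAY_MAC)

reply_to_server1 = leaf_a.handle_arp_request("1.1.1.1")
reply_to_server2 = leaf_b.handle_arp_request("1.1.1.1")
print(reply_to_server1, reply_to_server2)
```

Two servers on two different leafs get the identical answer, and neither leaf had to send the request anywhere else.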

In most cases, the packet’s forwarding decision, based on a combination of Layer 2, 3, 4 and possibly more (such as VXLAN), is made at the leaf. If it’s moving past a Layer 3 segment, the TTL gets decremented. The forwarding is typically determined at the leaf, and some sort of label or header is applied that contains its destination port.
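
As a sketch of that ingress-leaf decision: look up the destination, decrement the TTL only if the packet crosses a Layer 3 boundary, and wrap it in a header that names the egress leaf and port. The endpoint table, the `FabricFrame` header, and all addresses are hypothetical stand-ins for whatever a given fabric actually uses, and TTL expiry handling is left out.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class IpPacket:
    src_ip: str
    dst_ip: str
    ttl: int


@dataclass(frozen=True)
class Endpoint:
    subnet: str
    leaf: str
    port: str


@dataclass(frozen=True)
class FabricFrame:
    """Hypothetical fabric header carrying the destination leaf and port."""
    egress_leaf: str
    egress_port: str
    inner: IpPacket


class IngressLeaf:
    def __init__(self, endpoints):
        # Assumed to be programmed by the controller: IP -> where that host lives.
        self.endpoints = endpoints

    def forward(self, pkt: IpPacket) -> FabricFrame:
        src = self.endpoints[pkt.src_ip]
        dst = self.endpoints[pkt.dst_ip]
        if src.subnet != dst.subnet:
            # Crossing a Layer 3 segment counts as a routed hop.
            pkt = replace(pkt, ttl=pkt.ttl - 1)
        return FabricFrame(dst.leaf, dst.port, pkt)


leaf = IngressLeaf({
    "10.1.1.10": Endpoint("10.1.1.0/24", "leaf1", "eth1"),
    "10.1.1.20": Endpoint("10.1.1.0/24", "leaf2", "eth4"),
    "10.2.2.30": Endpoint("10.2.2.0/24", "leaf2", "eth7"),
})

bridged = leaf.forward(IpPacket("10.1.1.10", "10.1.1.20", ttl=64))
routed = leaf.forward(IpPacket("10.1.1.10", "10.2.2.30", ttl=64))
print(bridged.egress_port, bridged.inner.ttl, routed.egress_port, routed.inner.ttl)
```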

You have this somewhat with a full Layer 3 leaf/spine mesh, in that the local leaf is what answers the ARP. However, in a Layer 3 mesh, hosts connected to different leaf switches are on different network segments, and the leaf is the default gateway (without getting weird). In some applications, such as Hadoop, that’s fine. But for virtualization (unfortunately) there’s a huge need for Layer 2 adjacencies. For now, at least.

Another benefit of SDF is the ability to intelligently steer traffic through various network devices, known as service chaining. This is done without changing default gateways of the servers, bridging VLANs and proxy ARP, or other current methodologies. Since SDF throws out the rulebook in terms of forwarding, it becomes a much simpler matter to perform traffic steering. Cisco’s ACI does this, as does Cisco vPath and VMware’s NSX.

A policy, programmed on a central controller, can be put in place to ensure that traffic forwards through a load balancer and firewall. This also has a lot of potential in the realm of multi-tenancy and network function virtualization. In short, combined with other aspects of SDN, it can change the game in terms of how networks are architected in the near future.
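
A service chaining policy like that might look something like the following sketch. The group names, service names, and the `PolicyController` class are all hypothetical; this only shows the idea of the controller inserting extra hops into the path, not how ACI, vPath, or NSX actually model it.

```python
from dataclasses import dataclass


@dataclass
class ChainPolicy:
    src_group: str
    dst_group: str
    services: list  # ordered service nodes the traffic must pass through


class PolicyController:
    """Toy controller that turns a policy into an ordered list of hops."""

    def __init__(self):
        self.policies = {}

    def add_policy(self, policy: ChainPolicy) -> None:
        self.policies[(policy.src_group, policy.dst_group)] = policy

    def path(self, src_group: str, dst_group: str, destination: str) -> list:
        policy = self.policies.get((src_group, dst_group))
        hops = list(policy.services) if policy else []
        return hops + [destination]


ctrl = PolicyController()
ctrl.add_policy(ChainPolicy("web", "db", ["load-balancer", "firewall"]))

chained = ctrl.path("web", "db", "db-server-1")
direct = ctrl.path("app", "db", "db-server-1")
print(chained)
print(direct)
```

The servers never change their default gateway. The steering lives entirely in the policy, so adding or removing a firewall from the path is a change on the controller rather than on the hosts.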

SDF is only a part of SDN. By itself, it’s compelling, but as there have been some solutions on the market for a little while, it doesn’t seem to be “must have” to the point where customers are upending their data center infrastructures to replace everything. I think for it to be a must have, it needs to be a part